Extracting data is an essential part of working on new and innovative ideas. But how do you collect the large volumes of data from across the Internet that transform so many business processes?

Common methods of retrieving data from the Internet are APIs and web scraping. In this article, we explain how these two solutions work and whether there is an even better solution to the data collection problem.

What Is Web Scraping?

Web scraping is a technique for automatically extracting target data from the Internet. Scraping takes raw data in the form of HTML code from sites and converts it into a usable structured format. It's an invaluable tool for projects such as market research, sentiment analysis, competitive analysis, or data aggregation.
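To make the "raw HTML to structured format" idea concrete, here is a minimal sketch using only Python's standard library. The HTML snippet, the class names `name` and `price`, and the `ProductParser` class are all made up for illustration; a real page would need its own selectors.

```python
from html.parser import HTMLParser


class ProductParser(HTMLParser):
    """Collects the text of <h2 class="name"> and <span class="price"> tags."""

    def __init__(self):
        super().__init__()
        self.products = []   # finished records, one dict per product
        self._field = None   # field whose text we expect next, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "h2" and css_class == "name":
            self.products.append({})   # a name starts a new record
            self._field = "name"
        elif tag == "span" and css_class == "price":
            self._field = "price"

    def handle_data(self, data):
        if self._field and self.products:
            self.products[-1][self._field] = data.strip()
            self._field = None


# Hypothetical raw HTML, standing in for a downloaded page.
RAW_HTML = """
<div class="item"><h2 class="name">Laptop</h2><span class="price">$999</span></div>
<div class="item"><h2 class="name">Mouse</h2><span class="price">$25</span></div>
"""

parser = ProductParser()
parser.feed(RAW_HTML)
print(parser.products)
# [{'name': 'Laptop', 'price': '$999'}, {'name': 'Mouse', 'price': '$25'}]
```

The parser depends on the page's markup, which is why a scraper like this has to be updated whenever the site changes its structure.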

We already have a detailed article about what web scraping is and what it is used for. For those who want to dive deeper into the topic, we also have articles about the legality of web scraping and its advantages and disadvantages. Together, they provide the information you need to make an informed decision about whether to use this technique in your business operations.

What is an API?

Before diving into the specifics of API scraping, it's fundamental to grasp the concept of an API itself.

API stands for Application Programming Interface, which acts as an intermediary, allowing websites and software to communicate and exchange data and information.

To contact an API, you send it a request. The client must provide the URL and HTTP method so that the request can be processed correctly. Depending on the method, you can add headers, a body, and request parameters. The API then processes the request and returns the response received from the web server.

Endpoints work in conjunction with API methods. Endpoints are specific URLs that the application uses to communicate with third-party services and its users.
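As a rough illustration of these parts, the sketch below assembles a request from an endpoint, an HTTP method, query parameters, and headers without sending it. The host `api.example.com`, the `/v1/products` endpoint, and the `build_request` helper are assumptions made for the example, not a real service.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

BASE_URL = "https://api.example.com"   # hypothetical API host


def build_request(endpoint, method="GET", params=None, headers=None, body=None):
    """Assemble a request from its parts without sending it."""
    url = f"{BASE_URL}{endpoint}"
    if params:
        url += "?" + urlencode(params)          # request parameters
    data = json.dumps(body).encode("utf-8") if body is not None else None
    return Request(url, data=data, headers=headers or {}, method=method)


request = build_request(
    "/v1/products",                              # the endpoint
    method="GET",                                # the HTTP method
    params={"category": "laptops", "page": 1},
    headers={"Accept": "application/json"},
)
print(request.get_method(), request.full_url)
# To actually send it, you would pass it to urllib.request.urlopen(request).
```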

What is API Scraping?

API scraping is the process of extracting data from an API that provides access to web applications, databases, and other online services. Unlike extracting from a website's visual components, this method uses simple API calls to interact with a service's backend, ensuring more structured and dependable data retrieval.

APIs provide direct access to specific data subsets via dedicated endpoints, negating the need to wade through extensive raw code or HTML structure.

How Does API Scraping Work?

Collecting data via API usually involves the following steps:

- Initial Request: The scraper, or client, initiates a request to the API server with specifics regarding the requested data or action.

- Authentication: Various authentication techniques - such as an API key - are employed to ensure secure communication between the requester and server.

- Data Acquisition: Upon receiving the request, the API server processes it and returns pertinent information in structured formats like JSON or XML.

- Data Manipulation: The acquired data is then filtered, modified, and formatted as per programmatic requirements for its intended application.
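The four steps above can be sketched roughly as follows. The endpoint, the bearer-token header, and the shape of the JSON response are assumptions for illustration; a real API documents its own authentication scheme and fields. A canned response is used in place of a live call so the example runs offline.

```python
import json
from urllib.request import Request

API_URL = "https://api.example.com/v1/reviews?product=42"  # hypothetical endpoint
API_KEY = "YOUR_API_KEY"                                    # placeholder credential


def make_request(url, api_key):
    """Initial request + authentication: say what we want and attach credentials."""
    return Request(url, headers={"Authorization": f"Bearer {api_key}"})


def extract_reviews(response_text, min_rating=4):
    """Data acquisition + manipulation: decode the JSON, then filter and reshape it."""
    payload = json.loads(response_text)
    return [
        {"author": review["author"], "rating": review["rating"]}
        for review in payload["reviews"]
        if review["rating"] >= min_rating
    ]


request = make_request(API_URL, API_KEY)

# Stand-in for urllib.request.urlopen(request).read().decode("utf-8")
SAMPLE_RESPONSE = """
{"reviews": [
  {"author": "ann", "rating": 5, "text": "Great"},
  {"author": "bob", "rating": 2, "text": "Meh"}
]}
"""
print(extract_reviews(SAMPLE_RESPONSE))
# [{'author': 'ann', 'rating': 5}]
```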

Web Scraping vs. API: Which is Best?

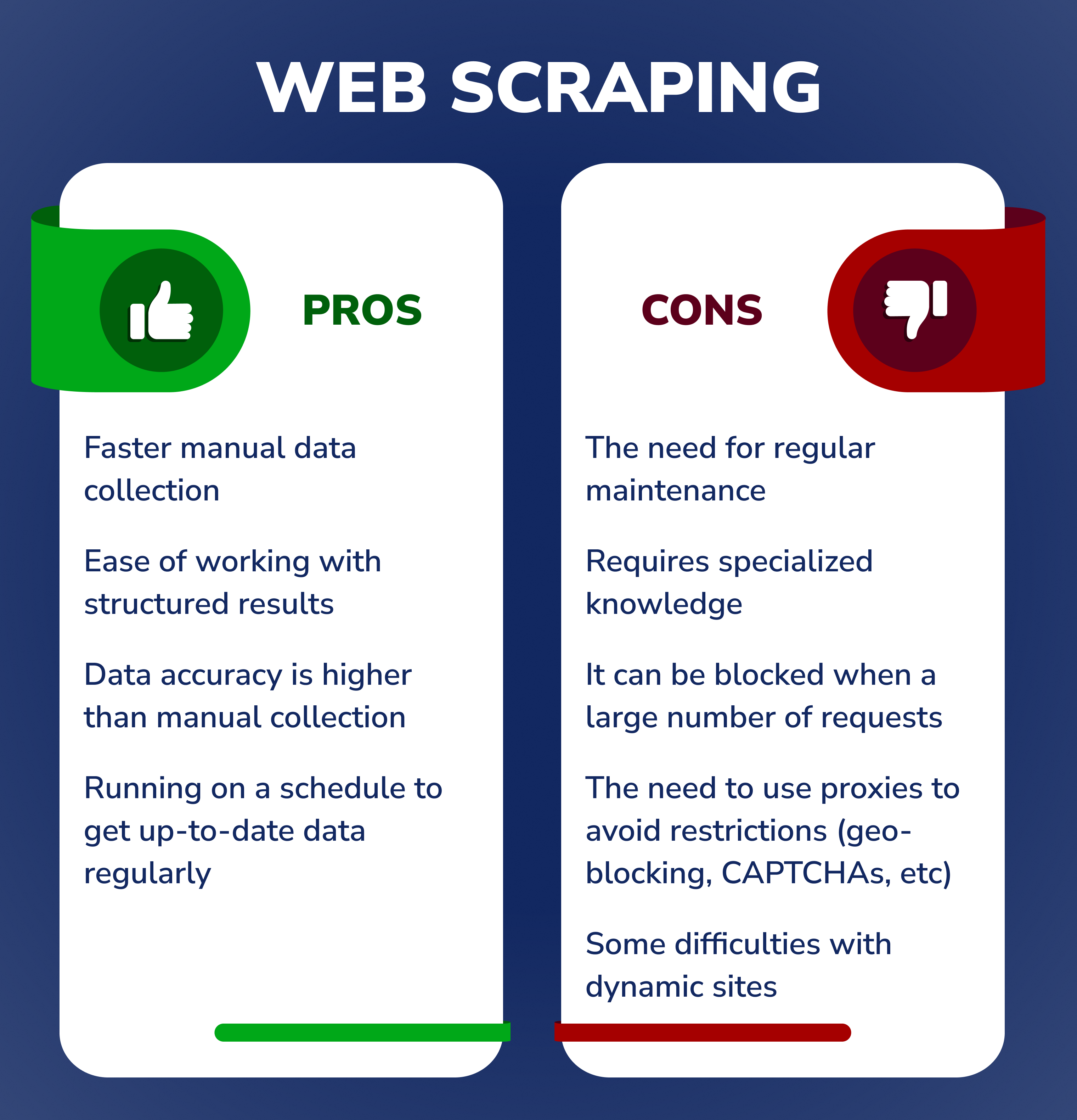

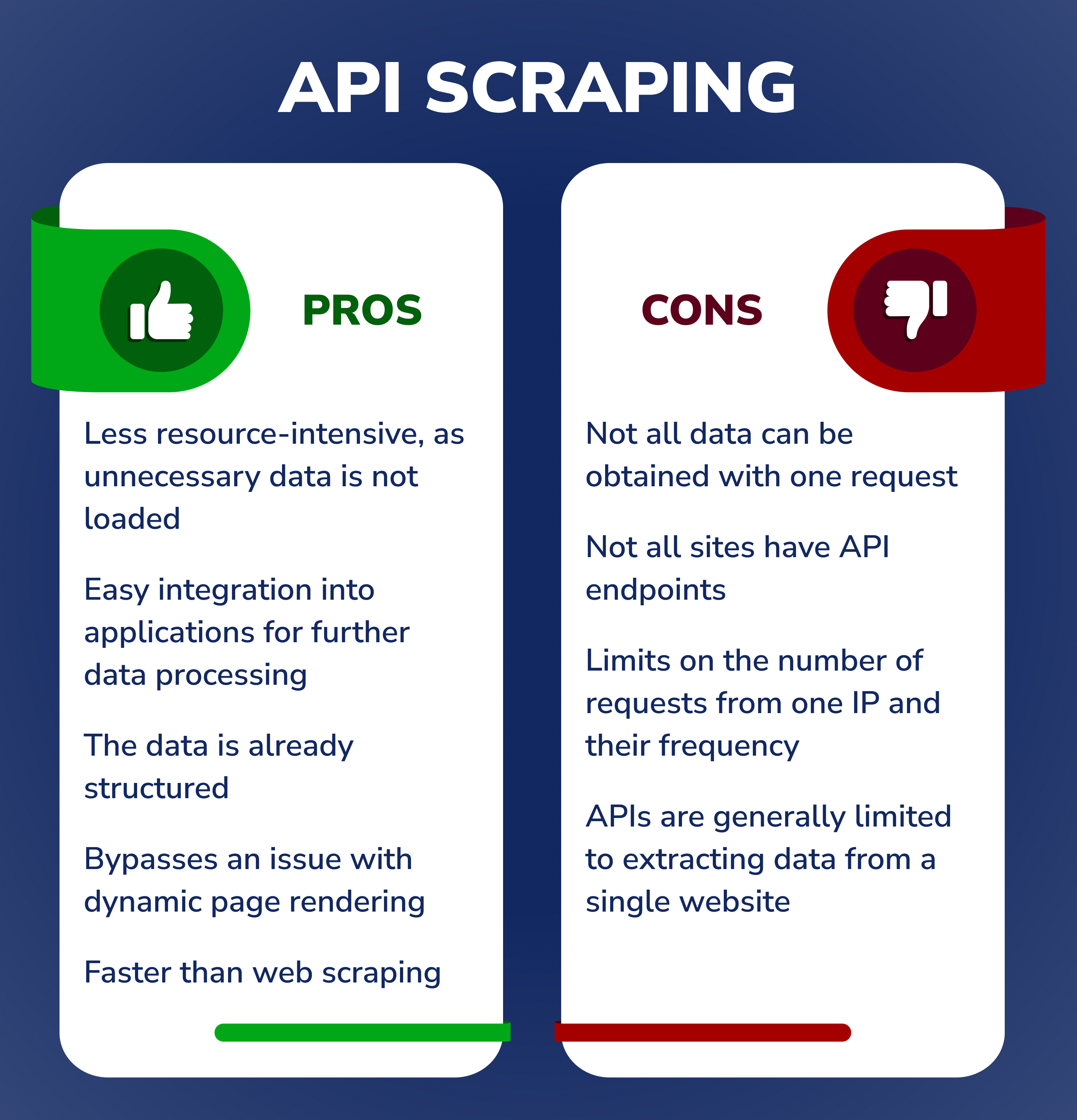

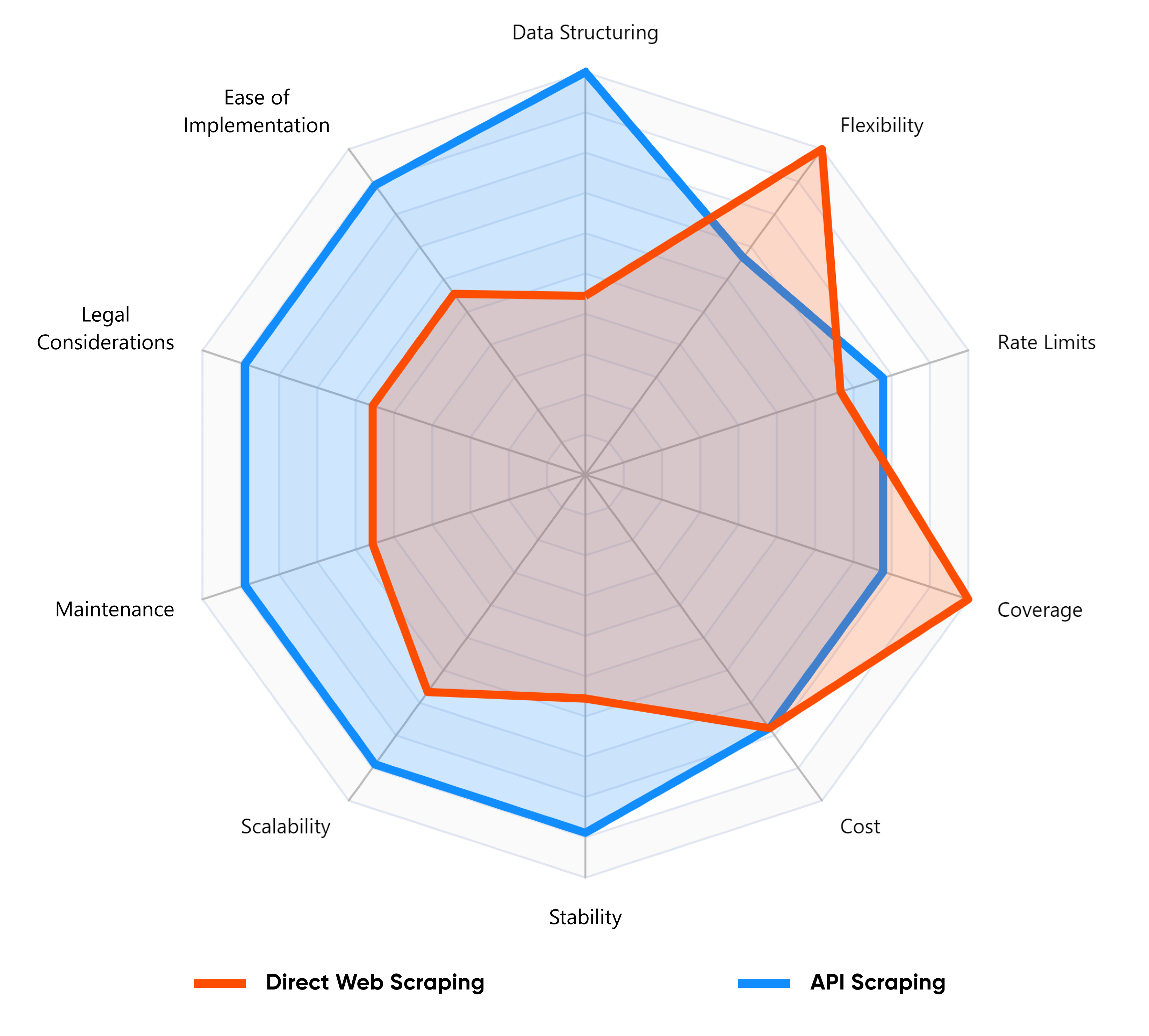

With web scraping, you have more control over how much data you collect and how often you scrape for new information. This allows for greater flexibility compared to APIs, which may offer more limited options in terms of data collection and frequency.

Both approaches can be used to collect data from websites, and which is best often depends on your specific project requirements. Web scraping lets you start extracting data quickly, since a simple scraper requires only basic coding skills, while API access offers relatively fast results thanks to its well-defined connectivity protocols.

In summary, if quick response time or accurate retrieval of frequently changing data is necessary for a task, then an API approach might be better. However, if flexibility in accessing different types of website content is preferred over speed, then a web scraper should suffice.

Is Using an API Considered Web Scraping?

API scraping provides a different method of retrieving data from the web than traditional web scraping. API calls allow users to interact directly with a service's backend to retrieve structured data, rather than parsing raw HTML content. This approach tends to be more stable and efficient because APIs are designed to be accessed programmatically and often return standard formats such as JSON or XML.

Regarding legality, it's important to note that while API scraping is a generally accepted practice, services may still impose restrictions on how you can access and use the data. Exceeding these limits, for example by sending requests too quickly, could result in throttling or an outright block by the platform, so it's critical to understand and adhere to the usage policies of any API you interact with.
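One common way to stay within such limits is to back off and retry when the service signals that you are sending requests too quickly (typically with an HTTP 429 response). The sketch below is a minimal illustration under stated assumptions: `RateLimited`, `fetch_with_backoff`, and `fake_fetch` are hypothetical names, and the fake fetch function stands in for a real HTTP call so the example runs without a network.

```python
import time


class RateLimited(Exception):
    """Raised by a fetch function when the API answers HTTP 429."""


def fetch_with_backoff(fetch, url, max_retries=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url); on RateLimited, wait base_delay * 2**attempt and retry."""
    for attempt in range(max_retries + 1):
        try:
            return fetch(url)
        except RateLimited:
            if attempt == max_retries:
                raise                      # give up after the last retry
            sleep(base_delay * 2 ** attempt)


# Demo: a fake fetch that is throttled twice before succeeding.
calls = {"n": 0}
waits = []


def fake_fetch(url):
    calls["n"] += 1
    if calls["n"] <= 2:
        raise RateLimited()
    return {"url": url, "status": 200}


result = fetch_with_backoff(
    fake_fetch, "https://api.example.com/v1/items", sleep=waits.append
)
print(result["status"], waits)   # 200 [1.0, 2.0]
```

Passing `sleep` as a parameter keeps the demo instant; in real use the default `time.sleep` applies the delays.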

What is a Web Scraping API?

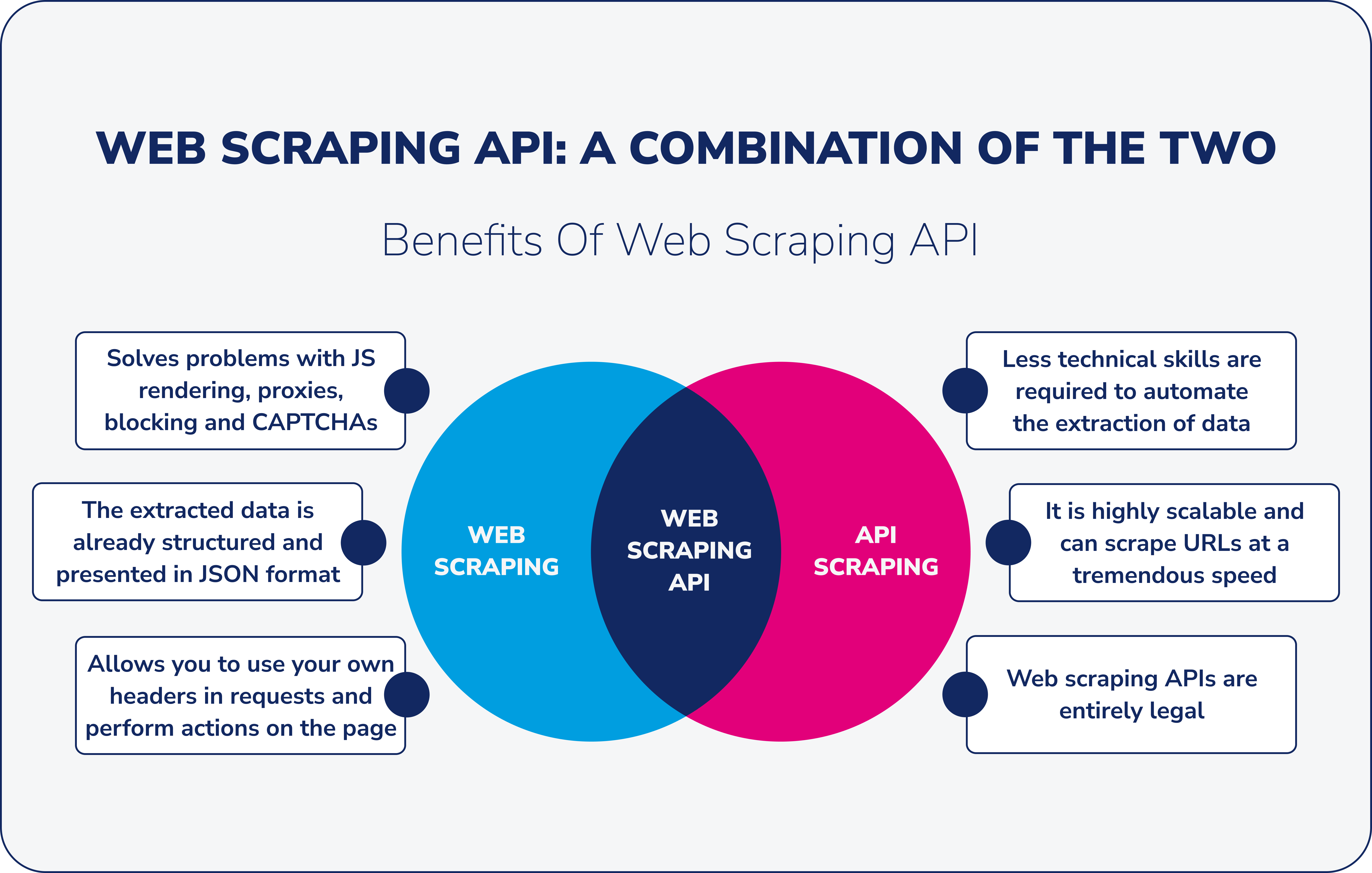

A web scraping API is a tool that extracts data from websites through an API call, facilitating seamless integration into other software. It handles challenges like JavaScript rendering, CAPTCHAs, and blocks, and returns structured data, typically in JSON format.

You don't need to create a scraping application from scratch and take care of proxies, infrastructure maintenance, scaling, and so on. It is enough to make a request using the provided API and get the content of the web page you need. If necessary, the request can also specify the proxy country and type, custom headers, cookies, and a waiting time, and can even execute JavaScript on the page.

In other words, a web scraping API connects the data extraction software built by the service provider with the websites you need to scrape.

There are two main types of web scraping APIs:

- General-purpose APIs, which work with any web data;

- Niche-specific APIs, which focus on particular types of data or sources and are better suited to particular sites, webpages, applications, and other services, for example, a Google SERP API or a Google Maps API.

What is a Web Scraping API Used For?

A web scraping API is used for various purposes such as analytics, lead generation, sentiment analysis, market research, and content marketing for better ranking in search engines. It can also extract specific data from any website or blog.

Companies typically use this tool when they lack the time, specialists, or budget to develop their own scraping solution, which would also need to be supported and maintained.


Advantages of Using API for Web Scraping

A web scraping API provides a more streamlined data extraction process compared to direct web scraping. While both methods aim to retrieve data from the web, using an API inherently reduces many of the challenges associated with the traditional scraping method. It acts as a bridge, ensuring that data extraction is not only efficient but also reliable. This reliability is critical, especially when dealing with dynamic websites or sites with complex structures.

Beyond these fundamental benefits, there are several specific advantages to choosing a web scraping API over the direct approach. Let's delve into them:

- Solves problems with JS rendering, proxies, blocking and CAPTCHAs.

- The extracted data is already structured and is typically presented in JSON format.

- A web scraping API makes it simple to send your own custom headers (user agents, cookies, etc.) when making requests to a website.

- It can be used by anyone who wants to automate the tasks associated with scraping content from the web.

- Most web scraping API services are built with scalability in mind, meaning they can scrape URLs at high speed, often processing thousands of pages per second, and can retrieve data on a daily basis.

- Using a web scraping API is generally legal, though it is subject to the same considerations as scraping itself. It is still better to respect site owners and avoid scraping too quickly, as sites may not be designed for a large number of requests.

While the benefits of using APIs for web scraping are clear, it's crucial to see how they measure up to other techniques. Let's dive into a comparison of the three methods we've discussed in this article.

| Criteria | Direct Web Scraping | API Scraping | Web Scraping API |
|---|---|---|---|
| Stability | Moderate: Depends on site structure changes. | High: APIs are typically stable. | High: Combines the stability of APIs with extraction capabilities. |
| Speed | Varies: Can be slow due to reliance on loading full pages. | Fast: Direct data access without loading visual content. | Fast: Optimized for data extraction with speed in mind. |
| Technical Difficulty | High: Requires parsing HTML, handling dynamic content, etc. | Moderate: Requires knowledge of API endpoints & responses. | Moderate: Simplifies challenges of both methods. |
| Cost | Varies: Proxies, CAPTCHA solvers, and infrastructure may add costs. | Varies: Many APIs have rate limits or paid tiers. | Costs associated with both, but often offers scalable solutions. |
| Data Quality | Can be messy: Data may require extensive cleaning. | High: Structured data, often in JSON. | High: Offers structured data, optimized for usability. |
| Legal Implications | Risky: Not all websites allow scraping. | Moderate: Respect API usage terms and limits. | Moderate: Combines the legal considerations of both methods. |
| When to Use | When there's no API available or specific data is needed. | When a site offers a public API with the needed data. | When there's no public API and direct web scraping is complicated by challenges like CAPTCHAs, blocks, and JavaScript rendering. |
| Scalability | Moderate: Can be resource-intensive with large volumes. | High: APIs are built for handling multiple requests. | High: Designed for large-scale operations and multiple sites. |
| Maintenance | High: Frequent updates may be needed due to site changes. | Moderate: APIs can change but usually with notice. | Moderate: Balances the maintenance needs of both methods. |
| Flexibility | Moderate: Can be adapted but requires effort. | Moderate: Limited to API's provided data. | High: Combines the flexibility of scraping with structured data of APIs. |
| Integration Ease | Moderate: Requires data cleaning and structuring. | High: Structured data eases integration. | High: Provides structured data ready for integration. |
| Reliability | Varies: Depends on website structures and anti-scraping measures. | High: APIs are typically reliable. | High: Optimized for reliable data retrieval. |
| Coverage | High: Can access all visible content on a page. | Moderate: Limited to data the API provides. | High: Comprehensive data access combining both methods. |
| Real-time Capability | Low: Requires full page loads and potential delays. | High: Direct data access allows near real-time retrieval. | High: Optimized for rapid data extraction and real-time capabilities. |

How Does a Web Scraping API Work?

- To gather data, call the base API endpoint, passing the URL you want to scrape as a body parameter and your API key as a header. There are also optional parameters you can set, including custom headers, the use of rotating proxies, their type and country, blocking of images and CSS, timeouts, browser window size, and JS scenarios such as filling out a form or clicking a button.

- Send the extracted data to your own tools for further HTML processing, for example, parsing with regular expressions to obtain specific data in a structured form. Our service also lets you use extraction rules to get only the data you need in JSON format, without saving the raw data.

- Stream the data to your database. You can use your own software tools or integration platforms such as Zapier or Make. We wrote about this in more detail in the article about web scraping using Zapier.
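The first two steps might look roughly like this in Python. Everything service-specific here is an assumption for illustration: the endpoint URL, the `x-api-key` header name, and the `proxy_type` and `proxy_country` option names are hypothetical, so check your provider's documentation for the real ones. The request is only built, not sent, and a sample HTML string stands in for the returned page.

```python
import json
import re
from urllib.request import Request

SCRAPER_ENDPOINT = "https://api.scraper.example/v1/scrape"  # hypothetical base endpoint


def build_scrape_request(target_url, api_key, **options):
    """Put the target URL (plus optional settings) in the JSON body, the key in a header."""
    body = {"url": target_url, **options}
    return Request(
        SCRAPER_ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={"x-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def extract_emails(html):
    """Pull e-mail addresses out of raw HTML with a simple regular expression."""
    return sorted(set(re.findall(r"[\w.+-]+@[\w-]+\.[\w.-]+", html)))


request = build_scrape_request(
    "https://example.com/contact",
    "YOUR_API_KEY",
    proxy_type="residential",   # hypothetical optional parameters
    proxy_country="US",
)

# Stand-in for the HTML content the service would return.
SAMPLE_HTML = (
    '<a href="mailto:sales@example.com">sales@example.com</a> '
    "<p>support@example.org</p>"
)
print(extract_emails(SAMPLE_HTML))
# ['sales@example.com', 'support@example.org']
```

The regular expression is deliberately simple and will miss or over-match some addresses; extraction rules on the provider side, where available, avoid this post-processing entirely.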

How to Choose the Best Web Scraping API

Choosing the right web scraping API for your specific needs can be a confusing process, so when selecting a service, here are some things to think about first:

- The pricing structure should be transparent, with every detail clearly stated and no hidden costs surfacing at a later stage. Pay attention to the pricing plan and the cost per request through the data scraping API, and estimate how many pages you need to get data from.

- Pay attention to the speed of data collection. If you need to collect thousands or hundreds of thousands of records, you can lose a lot of time by choosing the wrong provider.

- Some sites have anti-scraping measures in place. If you are worried about not being able to collect data, check what features the service provides and how it deals with blocking.

- You may run into issues while using a web scraping API and need help solving them. Check whether the service provides customer support, so that when something goes wrong you can get a solution to your problem.

- Check whether the service provides detailed documentation. It should describe all service features and the steps needed to use them, be up to date, have a clear structure, and be understandable to everyone.

- Scraping different sites may require different types of proxies, so pay attention to the ability to select proxy types (datacenter and residential) and geolocation settings:

  - Residential proxies use real IP addresses tied to real physical devices, which makes it possible to replicate actual human behaviour.

  - Datacenter proxies typically come from data centers and cloud hosting services and are shared by many users simultaneously. These IP addresses are not listed by ISPs, so certain security precautions may be applied to them.
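For the pricing point in particular, a quick back-of-the-envelope calculation helps when comparing providers. The sketch below picks the cheapest plan that covers an estimated monthly volume and reports the effective cost per 1,000 pages. The plan names, prices, and request quotas are invented for the example and do not describe any real service.

```python
PLANS = [  # hypothetical (name, monthly price in USD, included requests)
    ("starter", 29, 50_000),
    ("business", 99, 500_000),
    ("scale", 249, 3_000_000),
]


def cheapest_plan(pages_per_month, plans=PLANS):
    """Return (name, price, cost per 1,000 pages) for the cheapest plan that fits."""
    candidates = [plan for plan in plans if plan[2] >= pages_per_month]
    if not candidates:
        return None                      # volume exceeds every plan
    name, price, _included = min(candidates, key=lambda plan: plan[1])
    return name, price, round(price / pages_per_month * 1000, 2)


print(cheapest_plan(250_000))   # ('business', 99, 0.4)
```

This assumes one request per page; if a provider charges extra for features such as JavaScript rendering or residential proxies, the estimate needs to account for that.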

Closing Thoughts

The importance of efficiently extracting web data cannot be overstated. While both web scraping and API scraping offer their unique advantages, the emergence of web scraping APIs represents a harmonious blend of both methods. These APIs not only streamline the data extraction process, but also circumvent many of the challenges associated with traditional scraping.

Considering the agility, reliability, and comprehensive data access they provide, web scraping APIs are proving to be the best choice for enterprises and developers. When venturing into the realm of data extraction, it's important to tailor your choice to your specific needs, but if you're looking for a robust, versatile, and efficient solution, a web scraping API may be your best bet.